Learning Skeletal Articulations with Neural Blend Shapes

This repository provides an end-to-end library for automatic character rigging and blend shapes generation as well as a visualization tool. It is based on our work Learning Skeletal Articulations with Neural Blend Shapes that is published in SIGGRAPH 2021.

Prerequisites

Our code has been tested on Ubuntu 18.04. Before starting, please configure your Anaconda environment by

conda env create -f environment.yaml

conda activate neural-blend-shapes

Or you may install the following packages (and their dependencies) manually:

- pytorch 1.8

- tensorboard

- tqdm

- chumpy

- opencv-python

Quick Start

We provide a pretrained model that is dedicated for biped character. Download and extract the pretrained model from Google Drive or Baidu Disk (9ras) and put the pre_trained folder under the project directory. Run

python demo.py --pose_file=./eval_constant/sequences/greeting.npy --obj_path=./eval_constant/meshes/maynard.obj

The nice greeting animation showed above will be saved in demo/obj as obj files. In addition, the generated skeleton will be saved as demo/skeleton.bvh and the skinning weight matrix will be saved as demo/weight.npy.

If you are interested in traditional linear blend skinning(LBS) technique result generated with our rig, you can specify --envelope_only=1 to evaluate our model only with the envelope branch.

We also provide other several meshes and animation sequences. Feel free to try their combinations!

Test on Customized Meshes

You may try to run our model with your own meshes by pointing the --obj_path argument to the input mesh. Please make sure your mesh is triangulated and has a consistent upright and front facing orientation. Since our model requires the input meshes are spatially aligned, please specify --normalize=1. Alternatively, you can try to scale and translate your mesh to align the provided eval_constant/meshes/smpl_std.obj without specifying --normalize=1.

Evaluation

To reconstruct the quantitative result with the pretrained model, you need to download the test dataset from Google Drive or Baidu Disk (8b0f) and put the two extracted folders under ./dataset and run

python evaluation.py

Blender Visualization

We provide a simple wrapper of blender's python API (>=2.80) for rendering 3D mesh animations and visualize skinning weight. The following code has been tested on Ubuntu 18.04 and macOS Big Sur with Blender 2.92.

Note that due to the limitation of Blender, you cannot run Eevee render engine with a headless machine.

We also provide several arguments to control the behavior of the scripts. Please refer to the code for more details. To pass arguments to python script in blender, please do following:

blender [blend file path (optional)] -P [python script path] [-b (running at backstage, optional)] -- --arg1 [ARG1] --arg2 [ARG2]

Animation

We provide a simple light and camera setting in eval_constant/simple_scene.blend. You may need to adjust it before using. We use ffmpeg to convert images into video. Please make sure you have installed it before running. To render the obj files generated above, run

cd blender_script

blender ../eval_constant/simple_scene.blend -P render_mesh.py -b

The rendered per-frame image will be saved in demo/images and composited video will be saved as demo/video.mov.

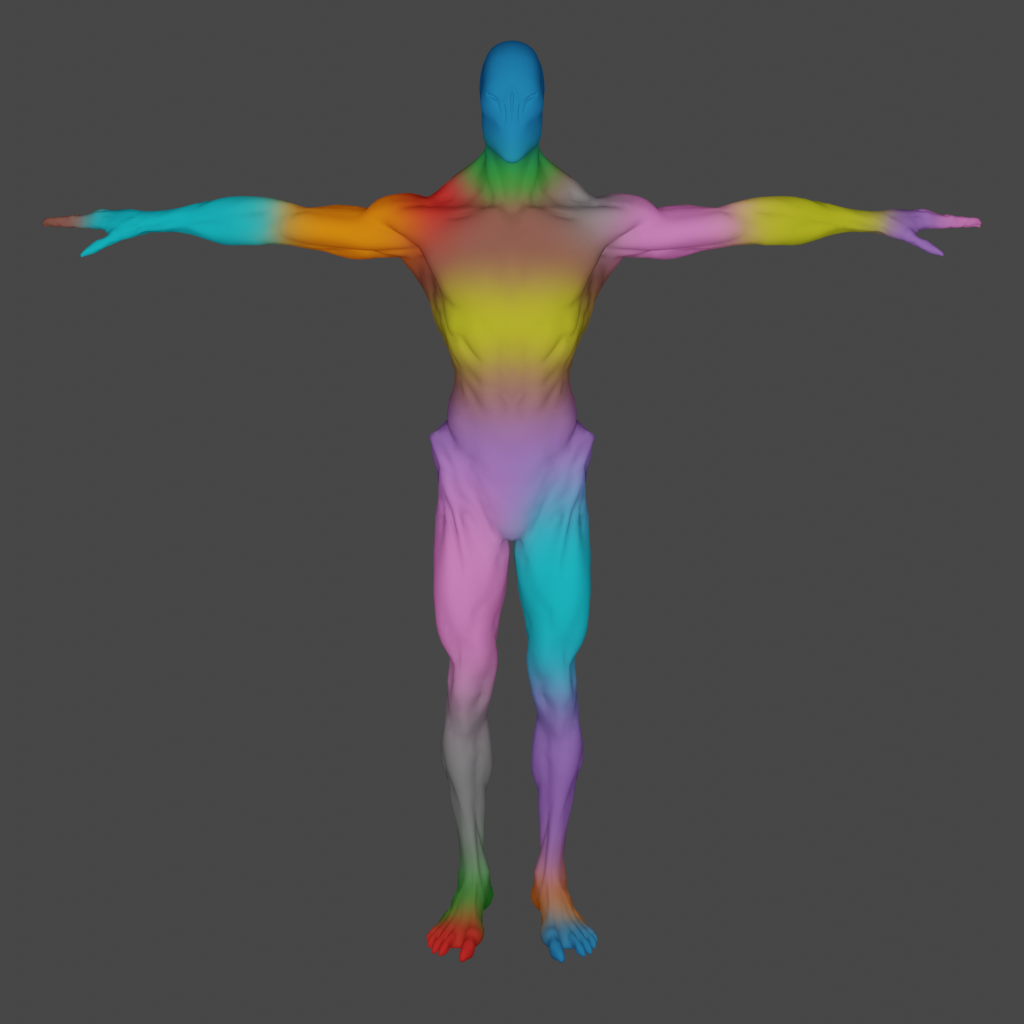

Skinning Weight

Visualize the skinning weight is a good sanity check to see whether the model works as expected. We provide a script using Blender's built-in ShaderNodeVertexColor to visualize the skinning weight. Simply run

cd blender_script

blender -P vertex_color.py

You will see something similar to this if the model works as expected:

Mean while, you can import the generated skeleton (in demo/skeleton.bvh) to Blender. For skeleton rendering, please refer to deep-motion-editing.

Acknowledgements

The code in meshcnn is adapted from MeshCNN by @ranahanocka.

The code in models/skeleton.py is adapted from deep-motion-editing by @kfiraberman, @PeizhuoLi and @HalfSummer11.

The code in dataset/smpl_layer is adapted from smpl_pytorch by @gulvarol.

Part of the test models are taken from and SMPL, MultiGarmentNetwork and Adobe Mixamo.

Citation

If you use this code for your research, please cite our paper:

@article{li2021learning,

author = {Li, Peizhuo and Aberman, Kfir and Hanocka, Rana and Liu, Libin and Sorkine-Hornung, Olga and Chen, Baoquan},

title = {Learning Skeletal Articulations with Neural Blend Shapes},

journal = {ACM Transactions on Graphics (TOG)},

volume = {40},

number = {4},

pages = {1},

year = {2021},

publisher = {ACM}

}

Note: This repository is still under construction. We are planning to release the code and dataset for training soon.